Hugging Face is the leading community and data science platform for open-source machine learning, hosting over 800,000 models. Its Enterprise Hub provides managed infrastructure and advanced security for businesses to host and share models internally or publicly.

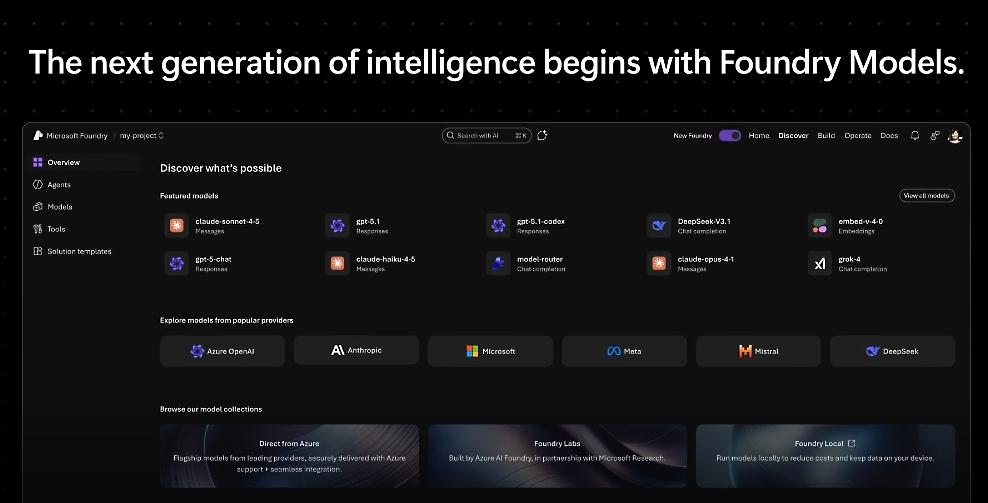

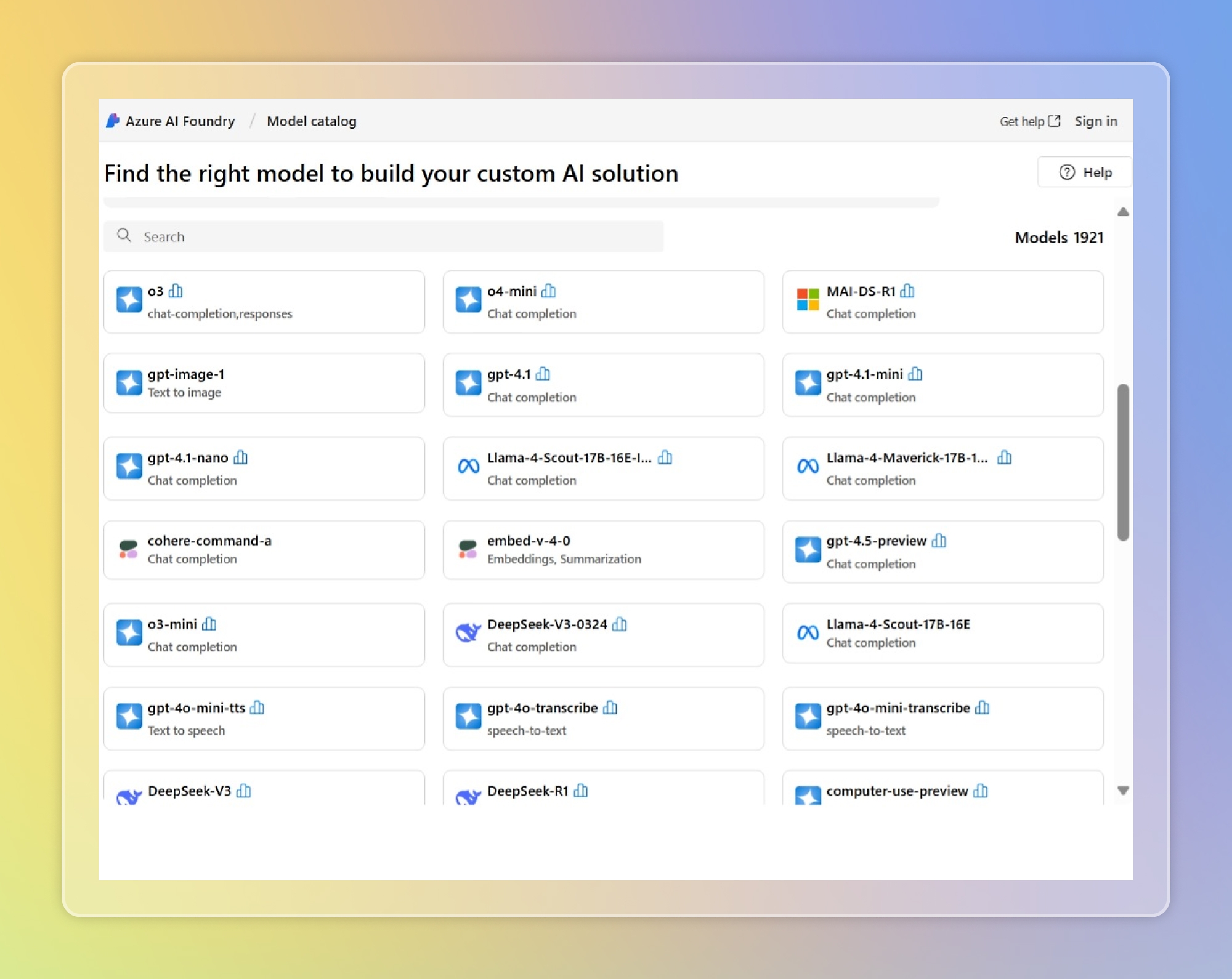

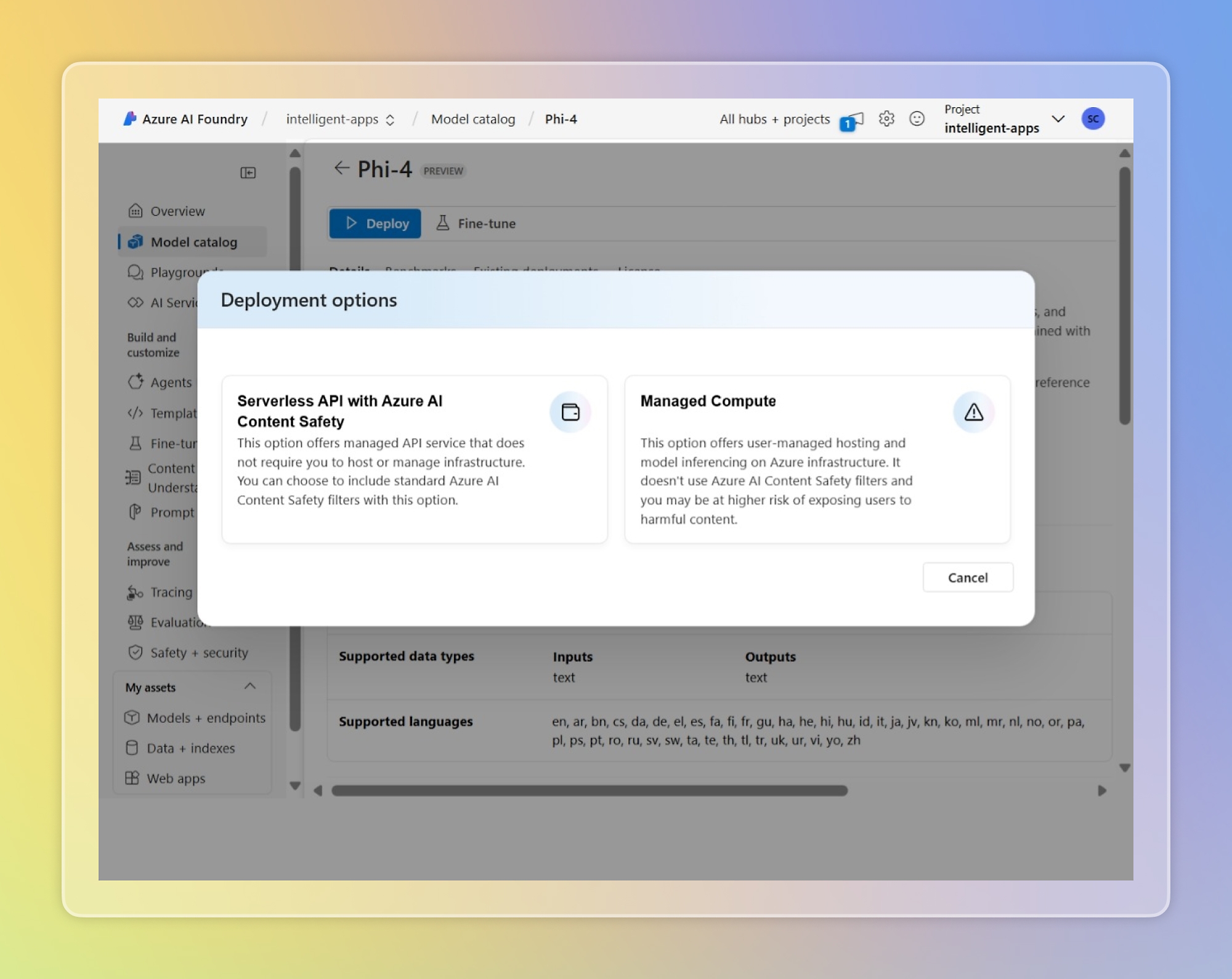

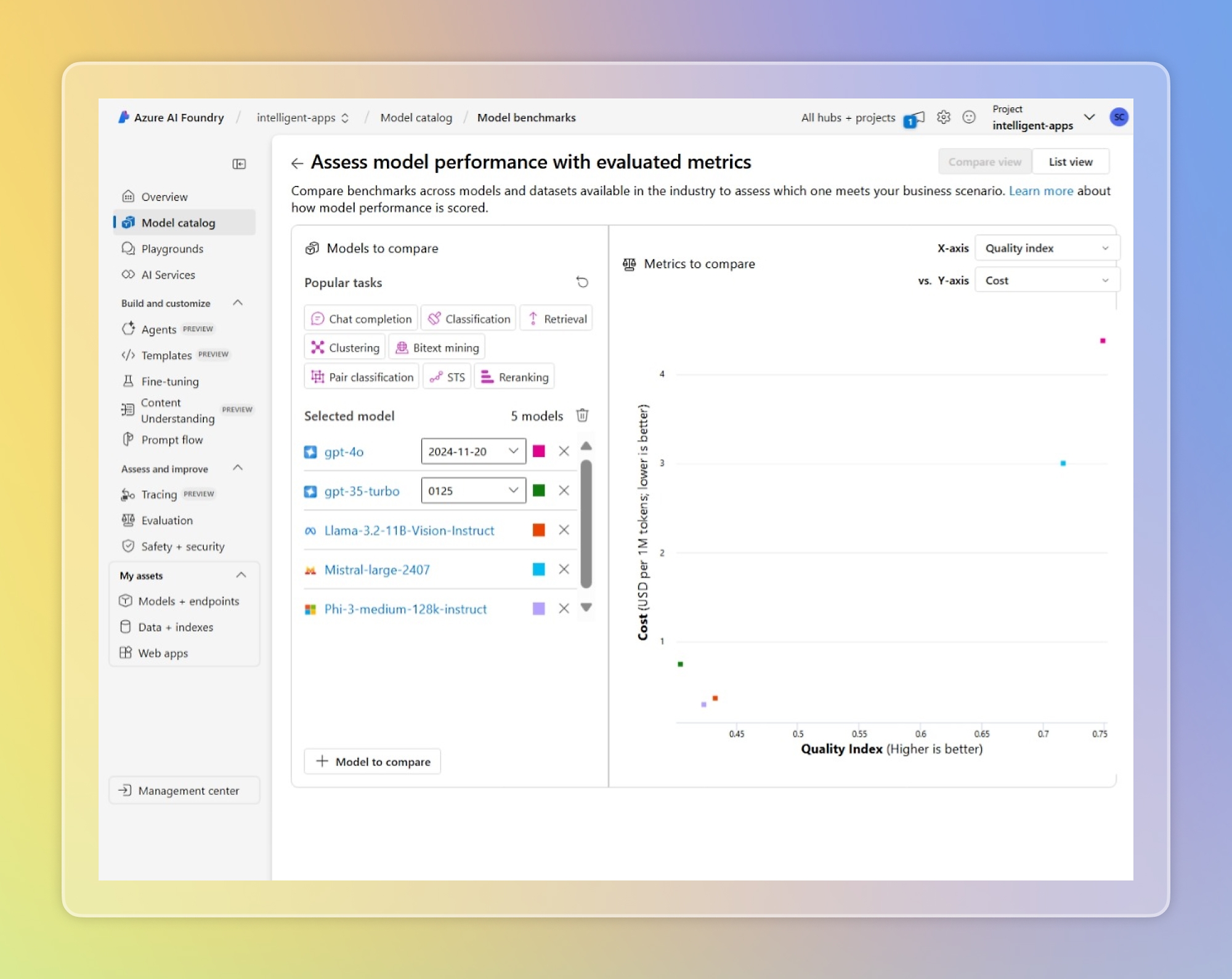

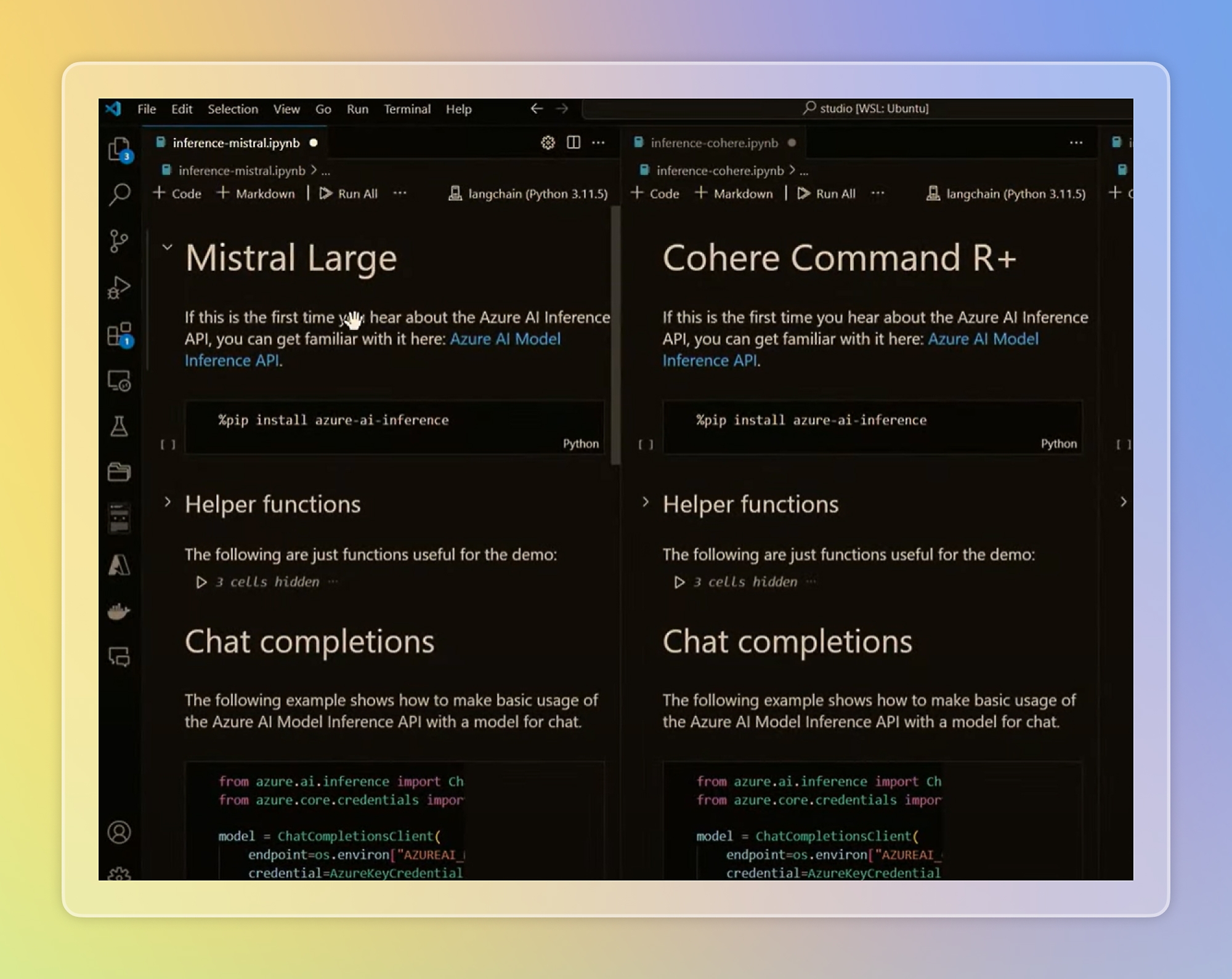

Azure AI Foundry (formerly Azure AI Studio) is Microsoft's primary enterprise hub for model discovery, offering over 11,000 models from both Microsoft and third-party partners. It supports diverse access modes including serverless APIs and managed compute with unified security and compliance.

Databricks Mosaic AI is an integrated platform for building, deploying, and governing generative AI applications. It offers Mosaic AI Model Serving, which allows businesses to serve open-source and proprietary foundation models with enterprise-grade quality and reliability.

Google Vertex AI Model Garden provides a centralized repository for discovering and deploying both Google-made (Gemini, PaLM) and third-party foundation models. It is designed for enterprise-grade ML development with deep integration into the Google Cloud ecosystem.

Anthropic, Meta, Mistral, Cohere, AI21 Labs, Stability AI

https://aws.amazon.com/bedrock/marketplace/

Amazon Bedrock is AWS's fully managed service that offers a choice of high-performing foundation models from leading AI companies via a single API. It integrates tightly with other AWS services like SageMaker and provides features for model evaluation, guardrails, and knowledge bases.

Snowflake Cortex is a managed service that provides instant access to foundation models and LLM-based functions within the Snowflake Data Cloud. It enables users to perform complex AI tasks on their governed data without moving it out of the Snowflake security perimeter.

IBM watsonx.ai is an enterprise studio that enables developers to train, validate, tune, and deploy both IBM's Granite models and third-party foundation models. It emphasizes AI governance and ethical AI across the model lifecycle.

Oracle Cloud Infrastructure (OCI) Generative AI is a fully managed service that provides a set of state-of-the-art foundation models for various use cases. It offers on-demand and dedicated AI cluster options for serving third-party models from vendors like Cohere and Meta.

["Meta","Mistral AI","Google","DeepSeek","xAI","Alibaba","SambaNova Systems (maybe?)"]

https://groq.com/groqcloud

GroqCloud is a high-speed AI inference platform powered by Groq's Language Processing Units (LPUs). It provides serverless API access to leading open-source foundation models from vendors like Meta and Mistral, emphasizing low latency and cost-effectiveness for real-time enterprise AI applications.
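As a rough illustration of what serverless API access can look like from client code, the sketch below builds (but does not send) a chat-completion request in the widely used OpenAI-style JSON shape. The base URL, the model id, and the helper name `build_chat_request` are assumptions for illustration and should be checked against GroqCloud's current documentation before use.

```python
import json
import urllib.request

# Assumed values, not taken from the text above: verify before use.
BASE_URL = "https://api.groq.com/openai/v1"  # assumed OpenAI-compatible base URL
MODEL_ID = "example-llama-model"              # hypothetical placeholder model id


def build_chat_request(api_key: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat-completion POST request without sending it."""
    body = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("YOUR_API_KEY", "Hello")
print(req.get_method(), req.full_url)
```

Passing the request to `urllib.request.urlopen` would actually send it; the sketch stops short of that so it can be inspected without credentials or network access.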

Salesforce Einstein Trust Layer

Salesforce

true

["Marketplace Subscription","Managed API","Embedded AI Services"]

Salesforce Einstein Trust Layer (powering Agentforce) is an enterprise AI gateway that provides secure access to third-party foundation models within the Salesforce ecosystem. It features data masking, toxicity detection, and audit trails to ensure compliance and privacy for generative AI applications.
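To make the idea of data masking concrete, here is a minimal sketch of masking sensitive values in a prompt before it leaves the application and restoring them in the response. It is not Salesforce's implementation: the regular expressions, the placeholder token format, and the function names `mask_prompt` and `unmask` are all assumptions chosen for illustration.

```python
import re

# Deliberately simple illustrative patterns; real systems use far more robust detection.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_prompt(prompt: str) -> tuple[str, dict[str, str]]:
    """Replace emails and phone numbers with tokens; return masked text and the mapping."""
    mapping: dict[str, str] = {}

    def substitute(kind: str, pattern: re.Pattern, text: str) -> str:
        def repl(match: re.Match) -> str:
            token = f"<{kind}_{len(mapping)}>"
            mapping[token] = match.group(0)
            return token
        return pattern.sub(repl, text)

    masked = substitute("EMAIL", EMAIL, prompt)
    masked = substitute("PHONE", PHONE, masked)
    return masked, mapping


def unmask(text: str, mapping: dict[str, str]) -> str:
    """Restore the original values in text returned by the model."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


original = "Email jane.doe@example.com or call +1 415 555 0100 today"
masked, mapping = mask_prompt(original)
print(masked)
```

Keeping the token-to-value mapping on the caller's side means the third-party model only ever sees the placeholders, which is the general intent behind masking in an AI gateway.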

SAP AI Foundation (including SAP AI Core) is a central hub for managing and orchestrating foundation models within the SAP Business Technology Platform (BTP). It enables businesses to access and integrate third-party LLMs into their business processes while ensuring governance and enterprise readiness.

NVIDIA AI Foundation Models (accessed via NVIDIA NIM) is a collection of over 80 community and NVIDIA-built models optimized for performance on NVIDIA infrastructure. It provides a standardized API for enterprises to discover and deploy models in the cloud or on-premises using NIM microservices.

Fireworks AI is a high-performance inference platform that provides low-latency access to the latest open-source foundation models. It offers an enterprise-grade API with support for fine-tuning and serverless deployment, and is increasingly available as a third-party marketplace offering on major clouds like Azure.

DeepInfra

DeepInfra

true

["Managed API","Serverless Inference"]

["Meta","Mistral AI","DeepSeek","Google (Gemma)","Zhipu AI (GLM)","Moonshot AI (Kimi)","MiniMax","Stability AI (SDXL)"]

https://deepinfra.com/models

DeepInfra is an AI inference provider focusing on cost-effective and scalable access to over 100 open-source foundation models. It is positioned as a 'budget champion' with a broad catalog of the latest models, though it primarily offers inference without advanced enterprise governance or fine-tuning.
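The loose field values preceding this description (name, vendor, a boolean, access modes, model vendors, URL) read like one flattened catalog record. A minimal sketch of loading such a record into a typed structure follows; the field names, the field order, and the `PlatformRecord` and `parse_record` names are assumptions, and the meaning of the bare `true` is not stated in the source.

```python
import json
from dataclasses import dataclass, field


@dataclass
class PlatformRecord:
    """One catalog entry; field names are illustrative, not from the source."""
    name: str
    vendor: str
    flag: bool  # the source gives a bare `true` without saying what it denotes
    access_modes: list[str] = field(default_factory=list)
    model_vendors: list[str] = field(default_factory=list)
    url: str = ""


def parse_record(lines: list[str]) -> PlatformRecord:
    """Parse six non-empty lines, in the assumed order, into a PlatformRecord."""
    name, vendor, flag, modes, vendors, url = (ln.strip() for ln in lines)
    return PlatformRecord(
        name=name,
        vendor=vendor,
        flag=json.loads(flag),            # "true" -> True
        access_modes=json.loads(modes),   # JSON array of strings
        model_vendors=json.loads(vendors),
        url=url,
    )


deepinfra = parse_record([
    "DeepInfra",
    "DeepInfra",
    "true",
    '["Managed API","Serverless Inference"]',
    '["Meta","Mistral AI","DeepSeek","Google (Gemma)","Zhipu AI (GLM)",'
    '"Moonshot AI (Kimi)","MiniMax","Stability AI (SDXL)"]',
    "https://deepinfra.com/models",
])
print(deepinfra.access_modes)
```

Records in this section that are missing one or more of the six fields would need a more tolerant parser than this fixed-order sketch.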

["Meta (Llama)","Mistral AI (Mistral/Mixtral)","Hugging Face (Zephyr)"]

https://www.anyscale.com/endpoints

Anyscale Endpoints is an AI model serving platform from the creators of Ray, offering cost-effective and scalable API access to popular open-source foundation models. It is designed for production-scale AI workloads, providing both public and private endpoints with deep integration into the Ray distributed computing ecosystem.

Cerebras Inference

Cerebras Systems

true

["Managed API","Cerebras Cloud REST API","Marketplace Subscription (AWS Bedrock)","Cerebras AI Model Studio"]

["Meta (Llama)","Mistral AI (Mistral)","Zhipu AI (GLM)","Amazon (Nova)"]

https://www.cerebras.ai/cloud

Cerebras Inference (available via Cerebras Cloud and AWS Bedrock) is a high-speed AI inference platform powered by Cerebras Wafer-Scale Engine (WSE) chips. It provides extremely low-latency access to leading open-source foundation models like Llama and GLM, aimed at enterprises requiring real-time performance at production scale.

Together AI is a cloud platform optimized for open-source foundation models, offering over 200 models for text, image, and video. It provides serverless inference and dedicated GPU clusters for both research and enterprise production workloads.

Replicate (acquired by Cloudflare in 2026) provides a cloud API for running and fine-tuning over 50,000 open-source and community models. It is known for its simplicity and 'one line of code' deployment, now deeply integrated into the Cloudflare Workers AI ecosystem.

["Microsoft (Phi)","Meta (Llama)","Mistral AI","Hugging Face community models"]

https://www.bentoml.com/

BentoCloud is a unified AI inference management platform that allows teams to deploy and scale any machine learning model as a production-ready API. It features an open model catalog and emphasizes efficiency with optimized model loading and sub-second cold starts.

WaveSpeed AI is a specialized cloud platform for visual AI, offering exclusive international API access to flagship video/image models such as Kuaishou's Kling and ByteDance's Seedance. It hosts over 600 visual foundation models with an emphasis on high-performance inference, zero cold starts, and early access to models from Asian AI leaders.

Vultr AI Model Stack

Vultr

true

["Managed API (NemoClaw)","Serverless Inference","Dedicated GPU Cloud"]

Vultr AI Model Stack is a specialized AI-native cloud infrastructure optimized for production-scale inference. It features the 'NemoClaw' agentic framework and provides integrated access to NVIDIA's Nemotron model family and other leading open-source LLMs on a globally distributed GPU stack.

DigitalOcean AI Platform

DigitalOcean

true

["Serverless Inference","Agent Development Kit","Managed API"]

DigitalOcean AI Platform is an AI-native cloud service providing access to over 70 open-source and frontier models via a centralized Model Catalog. It emphasizes day-zero access to new releases, intelligent model routing, and serverless inference for developers and growing businesses.

Modal is a serverless high-performance infrastructure platform that enables developers to serve AI models with minimal configuration. It features a curated Model Library and optimized runtimes for low-latency inference, supporting sub-second cold starts and instant autoscaling for diverse foundation models.

Perplexity Enterprise Agent API

Perplexity

true

["Managed API (Sonar)","Agent API (Third-party Orchestration)","Enterprise Max Subscription"]

["OpenAI","Anthropic","Meta (Llama)"]

https://docs.perplexity.ai/docs/agent-api/models

Perplexity Enterprise is an AI-powered research and orchestration platform that provides secure access to leading foundation models through its Agent API. It uniquely combines LLM reasoning with real-time web search, allowing businesses to build research-intensive applications and agents using a variety of first-party Sonar models and third-party presets.

Predibase is a developer-focused platform specializing in fine-tuning and serving small to medium-sized language models. It provides officially supported base models and a high-performance 'Turbo LoRA' inference engine for serving custom adapters at scale. Predibase is now part of Rubrik's enterprise data pipeline.